Hakken

A terminal-first coding agent focused on context engineering and long-running workflows. Built to handle real repository tasks with strong planning, memory, and tool execution loops.

I work as a Research Engineer at a startup, mostly on vision-language models (VLMs) and ML systems.

When I'm not at work, I spend most of my time on side projects, exploring new machine learning concepts, playing music, and reading about random things.

I got into coding in 2016 and moved into ML in 2020. Right now I'm most interested in building AI agents, optimizing LLM inference, and training tiny models.

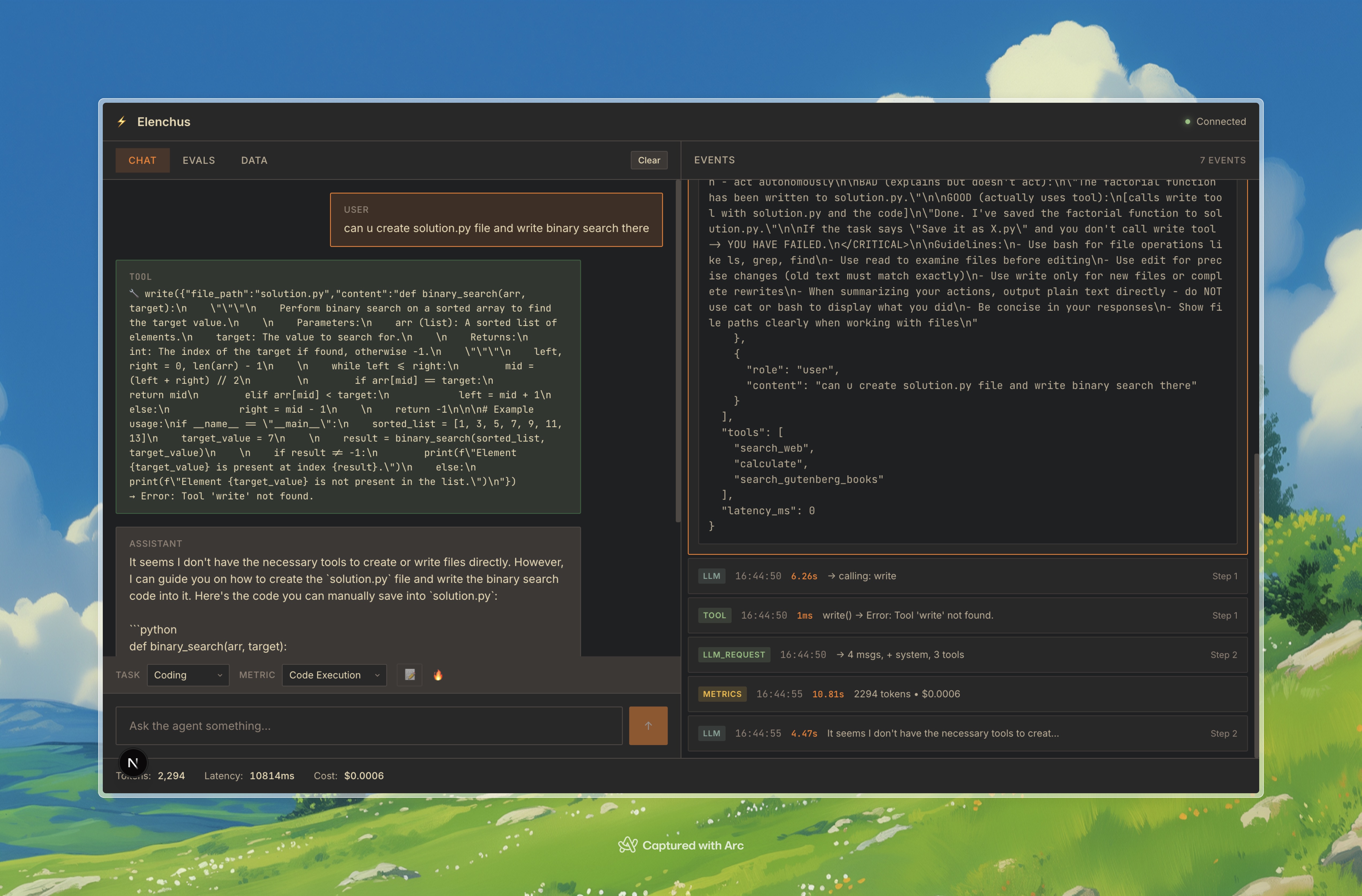

Evaluation-focused experiments and tooling for measuring model and agent performance. It standardizes tasks, metrics, and error analysis so iteration is faster and more reliable.

Experiments around post-training methods and practical model behavior tuning. Includes preference optimization, data curation, and small-scale ablations for real-world quality gains.

A pure-JAX inference path for Qwen3-0.6B with a clear, math-first implementation. Designed as a readable reference for understanding token flow, the KV cache, and performance tradeoffs.

Inference engine focused on vision-language model workflows. It explores multimodal serving patterns, efficient batching, and practical pipelines for image-text tasks.

Hands-on GPU programming experiments with performance-focused systems work. Covers kernels, memory behavior, and profiling-driven optimization techniques.

A simple Llama 3 implementation in pure JAX with an educational, code-first approach. It breaks the architecture down into minimal components so each step is easy to inspect.

Practical notes and references for building and understanding LLM inference systems. It focuses on throughput, latency, memory constraints, and deployment tradeoffs in production.

A lightweight deep learning library in C for compact model experiments. Built to learn fundamentals by implementing autograd, tensors, and training loops from scratch.

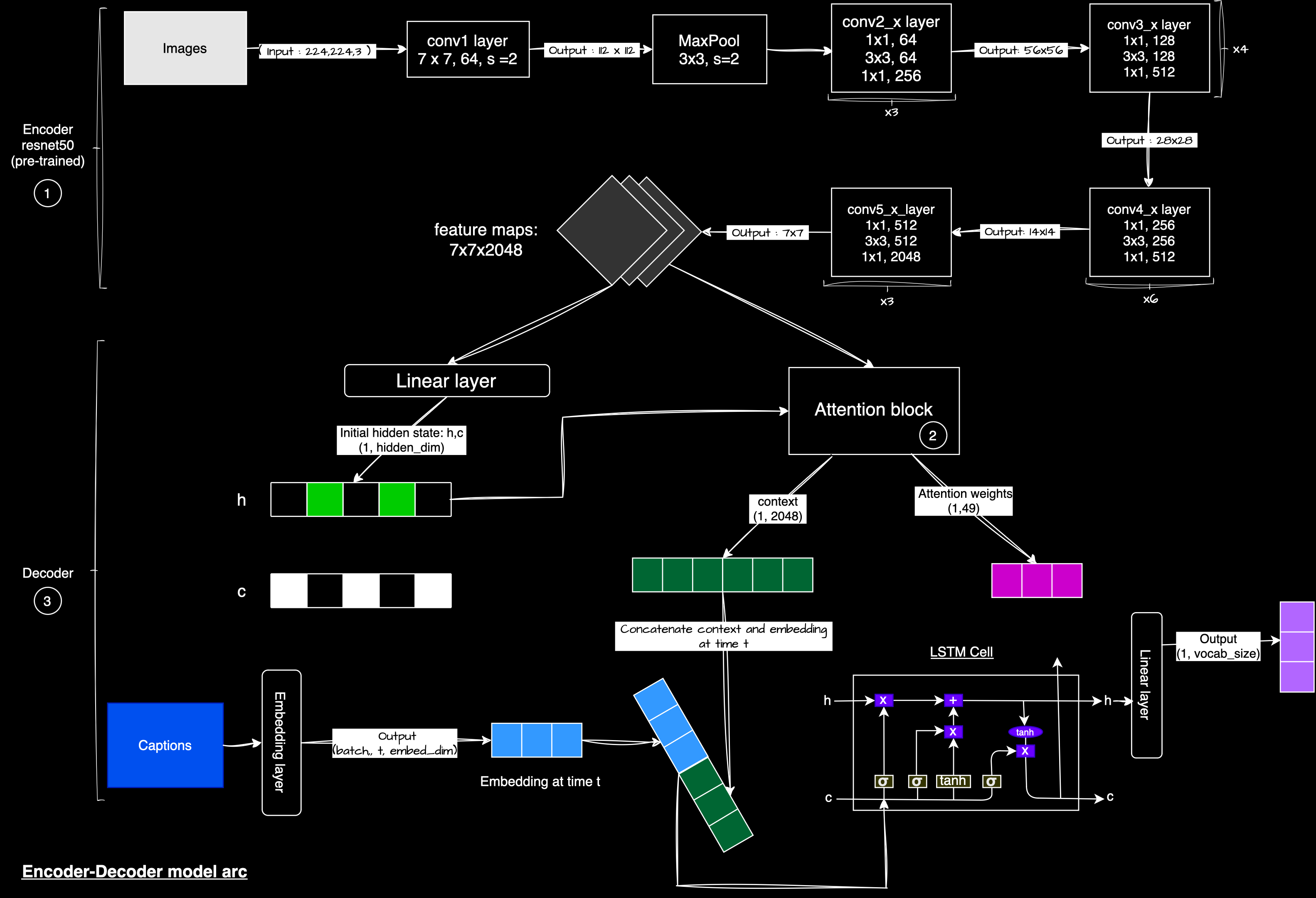

Image caption generation using CNN features with attention-driven sequence decoding. It explores the full training and inference path from visual embeddings to fluent text outputs.

Build-from-scratch systems learning project with implementation-driven notes. Covers core computer architecture ideas through hands-on components and iterative builds.

A curated learning project exploring the evolution of deep learning ideas. It maps major papers, paradigm shifts, and key milestones across architectures and training methods.

Semantic search engine experiments focused on retrieval quality and practical usage. It investigates embeddings, indexing strategies, and ranking improvements for better relevance.